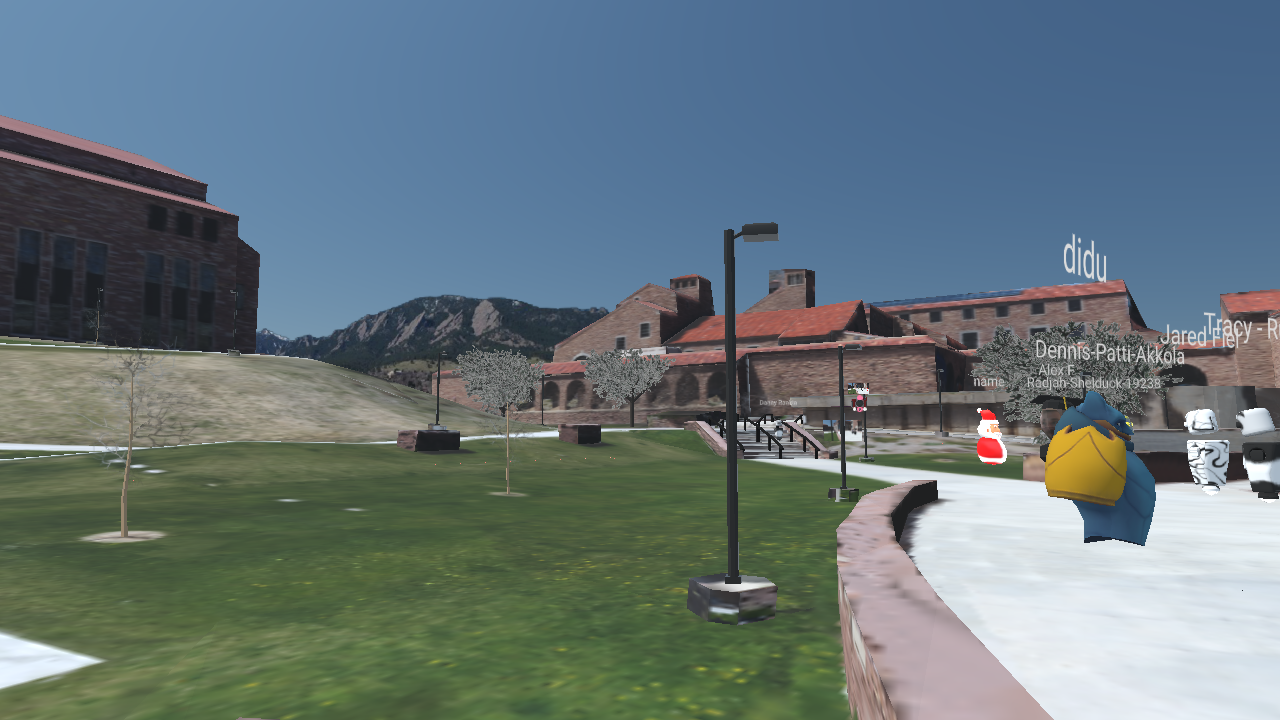

Screenshot from the final event.

concept

With my college graduation canceled because of COVID-19, and the alternative being a large Zoom call, I started thinking about what we could do to approximate a proper graduation experience. I had an idea for an interactive, virtual event and sent a proposal to ATLAS faculty (below).

The idea was to recreate the event location on a platform that allowed faculty, students and their families to "return" to campus one last time. I've used photogrammetry in the past and planned to use it to build the playable area. On top of that, I planned to use socket.io to create a simple Three.js multiplayer experience that let users walk around and talk via text chat.

process

While researching how to create the multiplayer platform, I quickly came across Mozilla Hubs. They were already building the infrastructure for VRChat-style interactions in the browser. I decided to build around their platform, which greatly reduced the workload and really enabled the whole experience. That left me to focus on the avatar customizer and the custom map.

I started with the map. Never having used Blender before, and with YouTube as my guide, I learned the workflow for capturing and cleaning up photogrammetry scans in the software. I looked into a couple of paid photogrammetry software solutions, but ultimately chose the open-source AliceVision Meshroom. I probably visited the area outside the Visual Arts Center a dozen times, each time returning with a new set of pictures to process. I learned something each time, and after upgrading from my mirrorless camera to a DJI Mavic Mini, I was able to get a highly detailed scan of the entire plaza.

Screenshot of the VAC Plaza reconstructed with AliceVision Meshroom.

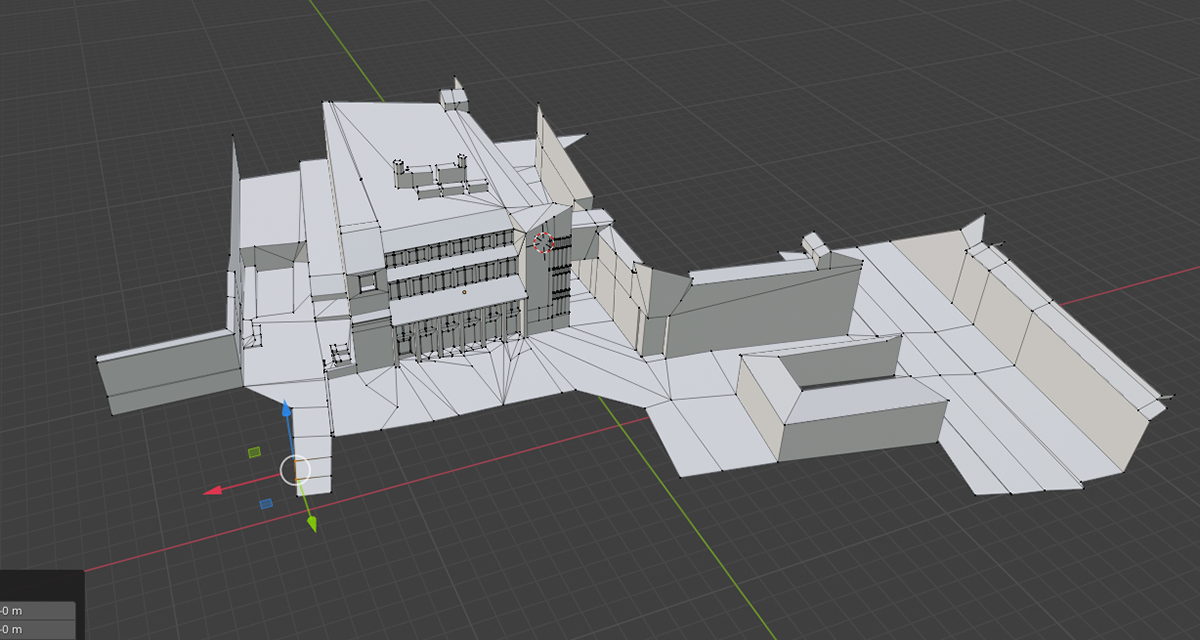

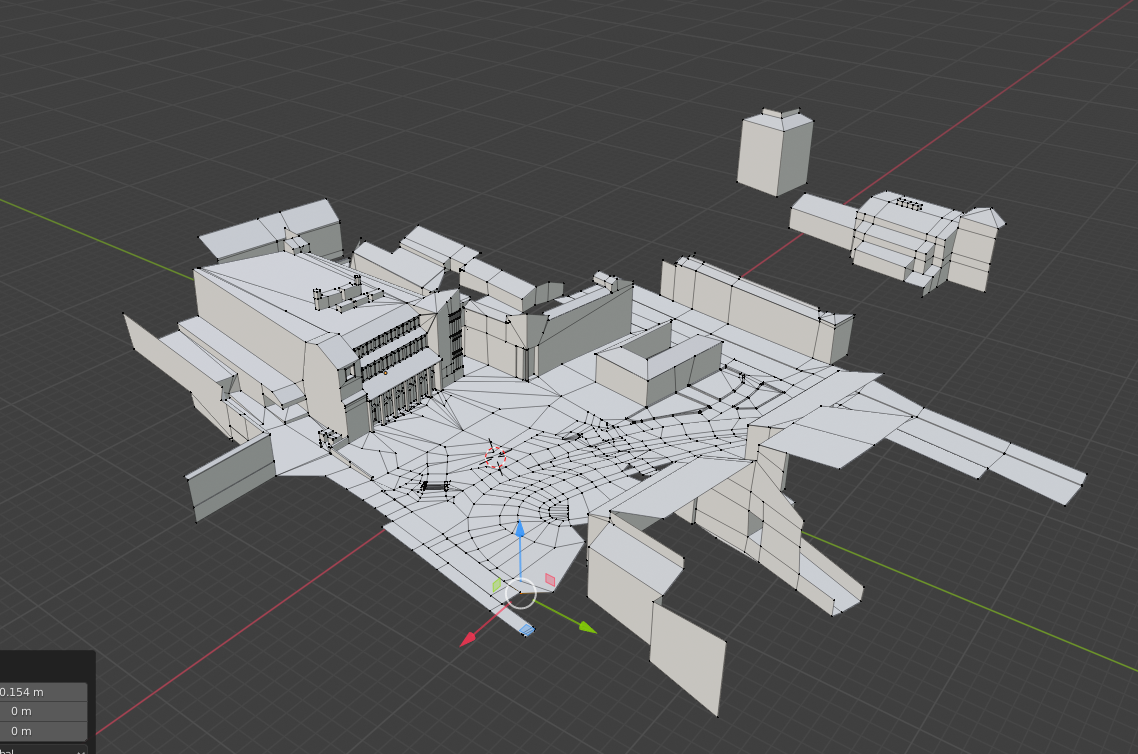

The model Meshroom produced was around a gigabyte in size; Mozilla Hubs recommends maps be no larger than 18MB. That's where Blender came in. Hours of retopology later, and after borrowing my friend's computer to project the texture onto the new model, I had a low-res map! The retopology process was really entertaining. I tried to optimize the scene as much as possible, which meant deleting any faces that would not be seen by the end users. From the sky, the map is a patchwork of disconnected meshes, but from the ground it looks like a complete scene! I separated out the textures into three levels of distance from the playable area, reducing the resolution at each step to save space. In the end, the glTF I exported was a slick 16MB.

In progress rebuild of the buildings / terrain.

Courtyard mostly complete, UMC missing.

To decorate the scene I also learned how to model trees and a couple other basic objects.

ATLAS Banner

Large Tree

Lamppost

Pine Tree

Small Tree

Twiggy Tree

I separated out the retopologized model into three different distances ( close, mid, far ), and transferred over the texture from the Meshroom model. Further out I dropped the resolution of the texture to shrink the overall file size.
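To give a feel for why dropping resolution with distance pays off, here is a rough sketch of the arithmetic. The tier sizes (4096, 2048 and 1024 pixels per side) and the names `TextureTier` and `pixelSavings` are assumptions for illustration only; the real resolutions aren't recorded in this post. It counts raw pixels, so the savings in an exported file will differ once image compression is applied.

```typescript
// Hypothetical texture tiers: side lengths are illustrative, not the real values.
interface TextureTier {
  name: string;
  sidePx: number; // square texture, pixels per side
}

const tiers: TextureTier[] = [
  { name: "close", sidePx: 4096 },
  { name: "mid", sidePx: 2048 },
  { name: "far", sidePx: 1024 },
];

// Raw pixel count of one square texture.
function pixelCount(tier: TextureTier): number {
  return tier.sidePx * tier.sidePx;
}

// Fraction of pixels saved compared with using the sharpest tier's
// resolution for every tier.
function pixelSavings(all: TextureTier[]): number {
  if (all.length === 0) return 0;
  const sharpest = Math.max(...all.map((tier) => pixelCount(tier)));
  const uniform = sharpest * all.length;
  const tiered = all.reduce((sum, tier) => sum + pixelCount(tier), 0);
  return 1 - tiered / uniform;
}

console.log(pixelSavings(tiers));
```

Halving the side length quarters the pixel count, so with these assumed sizes the three tiers together hold a bit less than half the pixels they would at full resolution throughout.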

I assembled all the pieces in Mozilla Spoke ( the map-builder for Hubs ) and tried it out.

Demoing the map in Mozilla Hubs

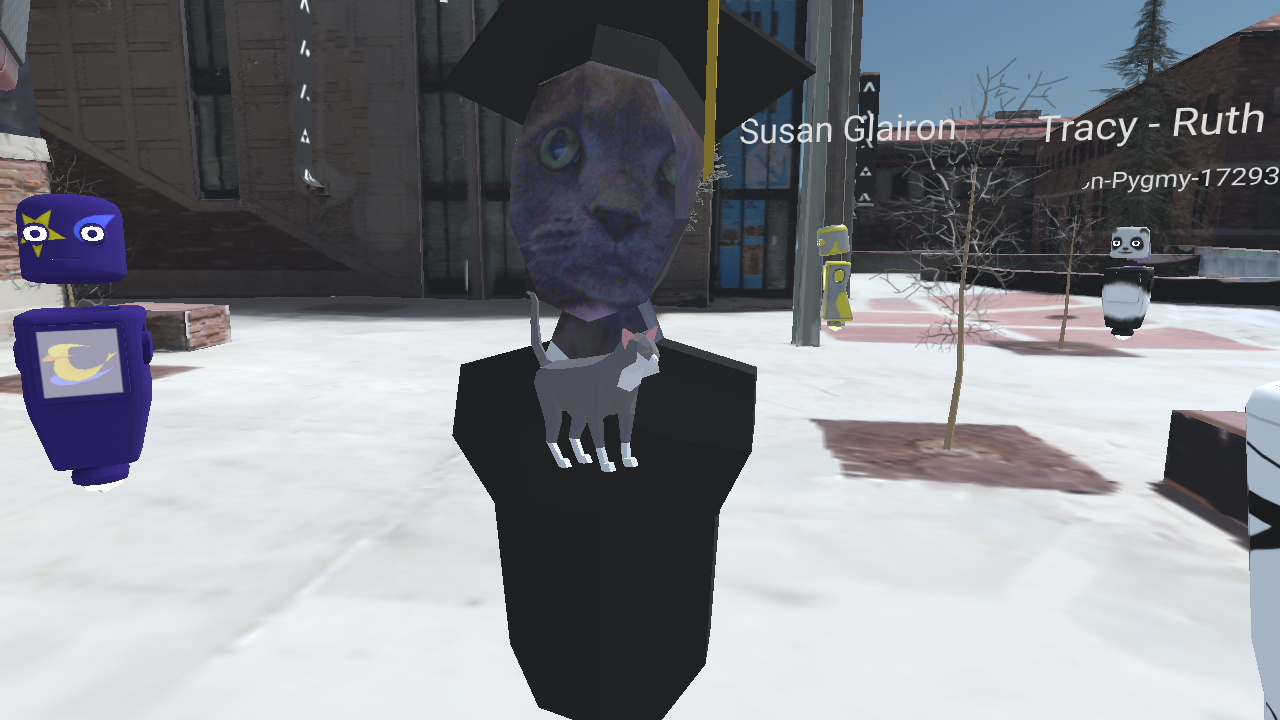

The last step was creating the avatar customizer. Hubs allows users to upload custom avatars. After creating a basic avatar shape and setting up the UVs, I created the customizer using Three.js. Users can let the website access their webcam and preview the avatar textured with the picture. Rather than seeing anonymous avatars, my peers would be able to see each other! As a little feature, I arranged the UVs for the sides of the head into a small area in the center of the forehead. Then, when a user takes their picture, the head is generally textured with a proper skin color! Users can then upload a square image to be textured on their cap. Lastly, the user downloads the model as a GLB and uploads it to Mozilla Hubs.
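The forehead trick can be illustrated with a small sketch. This is not the customizer's code: in the real thing the UV layout does the work and the renderer simply reads those pixels. The sketch only shows which part of the photo a small forehead patch covers and what color the sides of the head would roughly end up with. The names `UvRect`, `averagePatchColor` and `foreheadPatch`, the patch coordinates, and the convention that v = 0 is the bottom edge of the image are all assumptions.

```typescript
// A rectangle in UV space. Assumption: v = 0 is the bottom edge of the image.
interface UvRect {
  uMin: number;
  uMax: number;
  vMin: number;
  vMax: number;
}

type Rgb = [number, number, number];

// Clamp an integer pixel index into [0, size - 1].
function clampIndex(value: number, size: number): number {
  return Math.min(size - 1, Math.max(0, value));
}

// Average the RGBA pixels that fall inside a UV rectangle.
// `rgba` is laid out row by row from the top, four bytes per pixel.
function averagePatchColor(
  rgba: Uint8ClampedArray,
  width: number,
  height: number,
  patch: UvRect
): Rgb {
  const x0 = clampIndex(Math.floor(patch.uMin * width), width);
  const x1 = Math.max(x0, clampIndex(Math.ceil(patch.uMax * width) - 1, width));
  // Flip v: image rows start at the top while v starts at the bottom.
  const y0 = clampIndex(Math.floor((1 - patch.vMax) * height), height);
  const y1 = Math.max(y0, clampIndex(Math.ceil((1 - patch.vMin) * height) - 1, height));

  let r = 0;
  let g = 0;
  let b = 0;
  let count = 0;
  for (let y = y0; y <= y1; y++) {
    for (let x = x0; x <= x1; x++) {
      const i = (y * width + x) * 4;
      r += rgba[i] ?? 0;
      g += rgba[i + 1] ?? 0;
      b += rgba[i + 2] ?? 0;
      count++;
    }
  }
  return [Math.round(r / count), Math.round(g / count), Math.round(b / count)];
}

// Hypothetical patch: a small square around the middle of the forehead.
const foreheadPatch: UvRect = { uMin: 0.45, uMax: 0.55, vMin: 0.7, vMax: 0.8 };
```

In a browser, the pixel data could come from drawing the webcam frame to a canvas and calling `getImageData` on its 2D context, then passing the result's `data`, `width` and `height` to `averagePatchColor` along with `foreheadPatch`.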

results

The bulk of the program was a live stream of a pre-recorded message; afterwards, viewers were given links to Mozilla Hubs rooms.

Avatar customization was definitely an extra step users had to go through but contributed a lot to the overall experience. We still had plenty of robots and pandas around, but graduates were easy to spot in the crowd.

All in all, we only had around 50-70 users pop in and out (4 rooms capped at 50 users each were set up, but only the first two were used), tapering off after around 3 hours. The whole thing was fairly tame until people figured out they could type "/grow" in the chat to make their avatars larger... The reactions (at least the ones I heard) were really positive! People were walking around talking to each other and able to find friends in the crowd. Proximity-based voice chat had users congregating in groups as they would normally, which was really cool to see! Even our major's counselor was walking between groups greeting people and saying goodbye. Everyone understood that we couldn't offer a 1:1 replacement for the in-person ceremony, but they were happy to see each other one last time.

After our event, I heard that another event done in the same fashion was received less favorably.

Some thoughts on what could be improved / lessons learned.

1. ROOM CAP

Though the event was successful, it could have benefited from larger room caps. That's a fairly challenging problem, though, as we had 50+ people in a room where Mozilla says you should keep it below 30. Bandwidth is spread thin and performance drops, especially with dozens of sources of positional audio. That limitation sits closer to the core of Mozilla Hubs, and to what browsers can handle, than to anything in our setup.
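A back-of-the-envelope sketch shows why the load climbs so quickly with headcount. It assumes every participant has a live microphone and that each client receives one separate audio stream per other participant. That is a simplification I'm using for illustration, not a description of how Hubs actually routes audio, and the function names are made up.

```typescript
// Assumption: every participant has a live microphone and each client
// receives one separate audio stream per other participant.
function incomingStreamsPerClient(users: number): number {
  return Math.max(0, users - 1);
}

// Total streams delivered across the whole room under the same assumption.
function totalDeliveredStreams(users: number): number {
  return users * incomingStreamsPerClient(users);
}

for (const users of [30, 50]) {
  console.log(users, incomingStreamsPerClient(users), totalDeliveredStreams(users));
}
```

Under that assumption, going from 30 to 50 users raises the per-client count from 29 to 49 streams, and the room-wide total from 870 to 2450, which is growth well beyond the increase in headcount.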

2. CLEANER MAP

I learned a lot about map building ( & Blender ) through the process. The final map is fairly detailed, but textures fall apart on close inspection. With what I know now about UV mapping & texturing, with enough time I could add a lot of detail to the environment. I've also since learned better retopology / texture projection methods that could help further.

3. VIDEO GUIDES

Most people were able to enter the virtual graduation easily enough. That said, video tutorials for some processes ( uploading avatar files, accepting mic / video permissions, movement / navigation ) could have eased some people's experiences.

EVENT PHOTOS / VIDEO